PDF version of this entire document

With a single diffusion-based ring around the nose we can get a recognition rate of about 80%. This improves considerably when more rings and reference points are added, so the results so far should be treated as proof of concept or exploratory at best.

The figures show a similar approach with geodesic rings, which gave a recognition rate of more than 95%. The challenge is adapting diffusion masks to the task at hand. We are running experiments in order to learn which parameter values work best.

According to Wikipedia (http://en.wikipedia.org/wiki/Diffusion_wavelets), "[d]iffusion wavelets are a fast multiscale framework for the analysis of functions on discrete (or discretized continuous) structures like graphs, manifolds, and point clouds in Euclidean space. Diffusion wavelets are an extension of classical wavelet theory from harmonic analysis. Unlike classical wavelets whose basis functions are predetermined, diffusion wavelets are adapted to the geometry of a given diffusion operator T (e.g., a heat kernel or a random walk). Moreover, the diffusion wavelet basis functions are constructed by dilation using the dyadic powers (powers of two) of T. These dyadic powers of T diffusion over the space and propagate local relationships in the function throughout the space until they become global. And if the rank of higher powers of T decrease (i.e., its spectrum decays), then these higher powers become compressible. From these decaying dyadic powers of T comes a chain of decreasing subspaces. These subspaces are the scaling function approximation subspaces, and the differences in the subspace chain are the wavelet subspaces."
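To make the role of the dyadic powers more concrete, here is a minimal sketch of how the numerical rank of T^(2^j) decays as j grows. It is not the code used in our experiments: the operator (a lazy random walk on a small path graph), the helper names `lazy_walk_operator` and `dyadic_ranks`, and the threshold `eps` are all assumptions made for illustration.

```python
import numpy as np

def lazy_walk_operator(n):
    """Symmetric lazy random-walk operator on a path graph with n nodes (toy example)."""
    A = np.zeros((n, n))
    idx = np.arange(n - 1)
    A[idx, idx + 1] = 1.0
    A[idx + 1, idx] = 1.0
    d = A.sum(axis=1)
    S = A / np.sqrt(np.outer(d, d))   # D^{-1/2} A D^{-1/2}, eigenvalues in [-1, 1]
    return 0.5 * (np.eye(n) + S)      # lazy version, eigenvalues in [0, 1]

def dyadic_ranks(T, levels, eps=1e-6):
    """Numerical rank of T^(2^j) for j = 0 .. levels-1, via repeated squaring."""
    ranks = []
    P = T.copy()
    for _ in range(levels):
        s = np.linalg.svd(P, compute_uv=False)
        ranks.append(int((s > eps).sum()))  # singular values above the threshold
        P = P @ P                           # T^(2^j) -> T^(2^(j+1))
    return ranks

ranks = dyadic_ranks(lazy_walk_operator(32), levels=8)
print(ranks)
```

The shrinking ranks correspond to the chain of decreasing subspaces mentioned in the quotation; an actual diffusion wavelet construction would additionally orthogonalise and compress the basis at each level, which this sketch does not attempt.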

If we are using diffusion geometry, we should also compare descriptors and not only distances. A closer look at the code will hopefully make it clear what comprises the diffusion wavelet basis functions (or their equivalents). So far the code has been used through its interfaces merely for masking purposes.

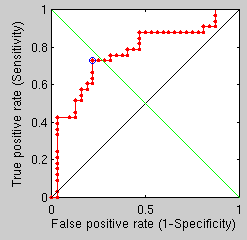

We spent a couple of days looking at descriptors and how they can be used to efficiently distinguish between surfaces representing faces. A dense, brute-force operation did not work well, so the path explored at the moment looks at taking just a few interesting features, such as the eyes and the nose tip, then measuring the spectral distance between those. The ROC curve shows the result of a crude comparison: measuring the distance between two points only. As more such distances are aggregated, performance (accuracy) ought to improve.

Taking two spectral distances between landmark points yields similar performance, so a denser map of distances will be implemented.
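As an illustration of what a spectral distance between two landmark points can look like, the sketch below computes the diffusion distance from the eigendecomposition of a Laplacian, using d_t(i, j)^2 = sum_k exp(-2 t lambda_k) (phi_k(i) - phi_k(j))^2. This is one common choice and is assumed here; the notes above do not fix the exact distance. The toy path-graph Laplacian, the time scale `t` and the function names are likewise hypothetical stand-ins for a mesh Laplacian and its parameters.

```python
import numpy as np

def path_laplacian(n):
    """Combinatorial Laplacian of a path graph; a stand-in for a mesh Laplacian."""
    L = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
    L[0, 0] = L[-1, -1] = 1.0
    return L

def diffusion_distance(L, i, j, t=1.0):
    """Diffusion distance between vertices i and j at time scale t."""
    lam, phi = np.linalg.eigh(L)        # eigenvalues and orthonormal eigenvectors
    diff = phi[i, :] - phi[j, :]        # difference of eigenvector entries per mode
    return float(np.sqrt(np.sum(np.exp(-2.0 * lam * t) * diff ** 2)))

L = path_laplacian(20)
d_eye_nose = diffusion_distance(L, 3, 10)   # indices play the role of landmarks
print(d_eye_nose)
```

With real data, `i` and `j` would be the vertex indices of detected landmarks, and a denser map would simply evaluate the same function over more pairs.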

The number of anatomically meaningful points that can be reliably extracted from a 3-D image is limited, so even using the spectral distances between all of them to measure intrinsic differences does not make a powerful enough discriminant. In essence, more experiments were run where differences in spectral distances, so to speak, were measured, squared and summed to give a figure of merit. Getting recognition rates of 90% or above is still extremely hard, no matter the adjustments made.
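The figure of merit described above can be sketched as a sum of squared differences between corresponding spectral distances of two faces. The function name `figure_of_merit` and the sample values are made up for illustration; the sketch assumes both faces provide the same landmark pairs in the same order.

```python
import numpy as np

def figure_of_merit(dists_a, dists_b):
    """Sum of squared differences between corresponding landmark distances."""
    a = np.asarray(dists_a, dtype=float)
    b = np.asarray(dists_b, dtype=float)
    if a.shape != b.shape:
        raise ValueError("both faces must provide the same landmark pairs")
    return float(np.sum((a - b) ** 2))

# Hypothetical distances (e.g. eye-eye, eye-nose, eye-nose) for two scans.
face_1 = [0.42, 0.31, 0.30]
face_2 = [0.40, 0.35, 0.29]
score = figure_of_merit(face_1, face_2)   # lower means more similar
print(score)
```

A lower score suggests the two surfaces are intrinsically more alike; thresholding this score over many pairs is what would produce an ROC curve.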

Arbitrarily aligning and drawing analogous points from a grid would not work well for both practical and theoretical reasons, such as the fact that we are not guaranteed to measure the same anatomical points while moving from one image to another. With fiducial points it might be another matter altogether.

A different approach is going to be explored rather than time being spent under the premise that substituting geodesics with wave or heat kernels will, on its own, improve the results considerably. Exact geodesics seemed like a theoretically sound substitution, but the existing implementation of them cannot be trivially run on the computational servers.

Many measurements on the surface do work, but they are not always accurate enough and robust enough to anatomical variation. More points could be obtained by running common edge detection operators on the photometric part, but then it becomes a 2D+3D problem.
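For reference, a common edge detection operator of the kind mentioned above is the Sobel filter. The sketch below is a plain NumPy version applied to a synthetic step image; it is only an assumption about what such a step could look like, not something that has been run on the photometric data.

```python
import numpy as np

def sobel_magnitude(img):
    """Gradient magnitude of a 2-D image using 3x3 Sobel kernels (edge-padded)."""
    p = np.pad(np.asarray(img, dtype=float), 1, mode="edge")
    # Horizontal gradient: right column minus left column, weighted 1-2-1.
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]) \
       - (p[:-2, :-2] + 2 * p[1:-1, :-2] + p[2:, :-2])
    # Vertical gradient: bottom row minus top row, weighted 1-2-1.
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]) \
       - (p[:-2, :-2] + 2 * p[:-2, 1:-1] + p[:-2, 2:])
    return np.hypot(gx, gy)

# Synthetic test image: dark left half, bright right half.
img = np.zeros((8, 8))
img[:, 4:] = 1.0
edges = sobel_magnitude(img)
print(edges[2])
```

Candidate points would then be taken where the magnitude is high, and mapped back onto the range image, which is where the 2D+3D coupling comes in.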

Roy Schestowitz 2012-01-08